
November 21, 2013

GRIT Sneak Peek: How the Industry Really Feels About Change

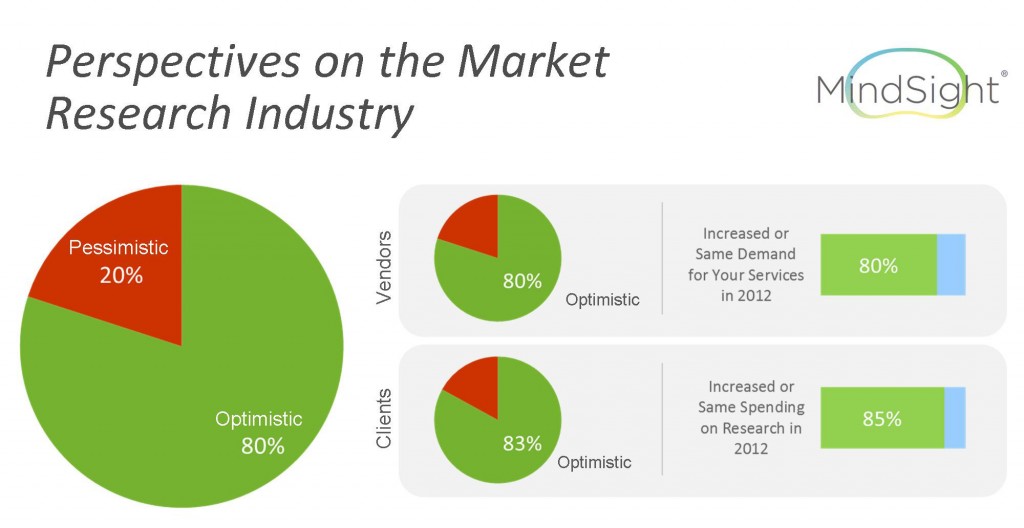



By David Forbes & Lenny Murphy

It’s become a tradition to debut some sneak peeks at the results from the most recent wave of each GRIT Study on the GreenBook blog, and here is the first one from the Fall/Winter 2013 report. We have more planned over the next month or so until the report is published, but we wanted to start with one of the question areas that elicited the most respondent feedback during the study: the MindSight emotional measurement module.

We’re always looking for new ways to increase the depth and value of the insights in GRIT, and since understanding the unconscious drivers of behavior is a particularly hot topic right now, we decided to incorporate a technique that addresses it in this latest round of GRIT. We had a few criteria: the technique had to work within the structure of a survey, be device agnostic, not require webcams, and generate insights validated by a large body of knowledge. That led us to implicit response tools, and since I was familiar with the MindSight product by Forbes Consulting and it covered all the bases outlined, we asked them to work with us. They graciously agreed.

We chose to focus our questions on understanding how research pros feel about change: not their stated, explicit, rational views, but rather their unconscious emotional reactions to changes in the industry. With that goal in mind, we built a module that we thought would shed some light on how folks really feel. The results are enlightening and surprising, as you’ll see a bit later in this post.

When we reviewed the respondent comments at the end of the survey, there were quite a few folks who didn’t seem to understand what we were trying to do with this type of tool. Perhaps it was due to unfamiliarity with implicit response methods or disagreement with the approach in general, but there was obviously a fair amount of confusion about that part of the survey.

With that in mind, before we dive into the results we thought it would be helpful to provide an explanation of the MindSight technique. Forgive us in advance if this seems promotional; that is not the intent. We simply can’t explain the approach without talking about the product.

MindSight is a proprietary product that uses a bit of applied neuroscience to uncover authentic emotional insight that respondents might not be able to come up with on their own, getting “under the radar” of the conscious mind and its natural editing functions.

There are 3 foundational elements of MindSight – the Emotional Discovery Window, the MindSight Motivational Model, and the MindSight Image Library.

Respondents are presented with visual stimuli and responses are elicited in a very short time frame (the Emotional Discovery Window) that precludes conscious editing. This timing is derived from research on the neuropsychology of emotion in response to visual imagery (Damasio, 2010).

Visual stimuli for the MindSight assessment are validated to evoke the nine types of feelings specified in the MindSight Motivational Model (Forbes, 2011). The model organizes nine concepts derived from past research to create a comprehensive “unified model” of motivation.

The MindSight Validated Image Library contains hundreds of images that are extensively validated to evoke the feeling of fulfilling (or failing to fulfill) one of the nine core motivations in the Unified Model.

In the actual MindSight research protocol, respondents are presented with a priming sentence that frames the focus of the exercise and are then asked to do a “sentence completion task,” choosing all of the pictures that complete the sentence.

So there is our primer on the model behind MindSight. We hope that clarifies things for folks who didn’t get it. Now on to the results!

GRIT RESULTS

CHANGE IS GOOD — WE WILL SURVIVE!!

Despite frequent talk of “evolve or perish” and the near-universal sentiment that the market research industry is undergoing significant transformation, it seems that the great majority of us are optimistic about the direction the industry is headed. Reminds me of that infamous Gloria Gaynor song. Come on, you know the words…

At first, I was afraid, I was petrified

Kept thinking, I could never live without you by my side

But then I spent so many nights thinking how you did me wrong

And I grew strong, and I learned how to get along

OPTIMISTS FEEL GOOD ABOUT THEIR PERSONAL PROSPECTS, AND THE PROSPECTS FOR THE INDUSTRY AT LARGE

There are clearly two ways of confronting impending change – one is all about the prospect of achievement and the other is about the prospect of failure. For Optimists, the emotional expectations of change center on opportunities for personal growth. This growth can take the form of creating success in the workplace – and being rewarded for that success. Optimists also see this change as offering distinctive opportunities to distinguish oneself within the industry – by innovating and standing out from the crowd.

Interestingly, for Optimists, market transformation isn’t just about individual gains, but also about progress for the research community as a whole. Optimists see the challenges of the industry as a force that can bring the research community together – creating opportunities to work cooperatively and in harmony.

PESSIMISTS FEAR FAILURE AND DISENGAGE

By contrast, Pessimists have a different set of emotional expectations about their prospects in a changing industry. They are generally insecure about the future – feeling at risk and vulnerable – and they fear failure, expecting to be defeated by industry change. In the end, Pessimists respond to the stress and insecurity about the future by retreating (remember fight or flight?). These individuals expect to react to the future by disengaging from the research community – feeling unproductive, ineffective and ultimately uninterested.

So there is our take (at a high level) on how GRIT respondents feel about change. There are nuances here that we explore more deeply in the report, where we correlate them with other measures in the survey to provide deeper context and insights.

The GRIT report will be published in January; stay tuned for more sneak peeks between now and then!

