November 30, 2015

The Sad State Of Mobile-First Market Research: A GRIT Sneak Peek

In this sneak peek at the forthcoming GRIT Report, we look at how research suppliers and research clients are adapting to mobile research.

Also new to this wave of GRIT was a series of questions about how GRIT respondents are adapting to mobile. This is an excerpt from the rough draft (the charts will be much prettier in the report!) on the findings from that area of exploration in the study.

First, we asked how many of their surveys are designed for mobile. This was a verbatim response where they were asked to enter a whole number, and for ease of analysis we have grouped responses into five buckets.

The results show that we still have a ways to go. 45% of suppliers and 30% of clients indicate that between 75% and 100% of all surveys they deploy are designed for mobile participation, but that leaves well over 50% of all surveys NOT mobile optimized.

A few stark differences stand out between clients and suppliers, perhaps understandably so. If we accept the proposition that buyers of research expect suppliers to drive best practice adoption for all studies deployed through them, then the higher percentage of suppliers ensuring that the majority of the surveys they field are designed for mobile is good news. However, it’s still a minority overall.

The numbers don’t look much better for the total sample (N=1,497). Respondents reported the percentage of their deployed surveys that are mobile optimized as follows: 0 (13% of respondents); 1 to 25 (22%); 26 to 50 (15%); 51 to 75 (8%); and 76 to 100 (42%). The bottom line is that only about 50% of all surveys are designed to be mobile. That is progress to be sure, but we as an industry still have much work to do to fit well in a mobile-first world.
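As a rough check on the "about 50%" figure, here is a minimal Python sketch that weights each bucket's midpoint by its share of respondents. The bucket shares come from the paragraph above. The midpoint weighting is an assumption made for illustration and is not necessarily how the GRIT figure was calculated. It also treats every respondent as fielding the same number of surveys, which the data does not tell us.

```python
# Share of the total sample (N=1,497) in each bucket, from the paragraph above.
# Keys are the (low, high) bounds of each bucket: percent of surveys designed for mobile.
bucket_shares = {
    (0, 0): 0.13,
    (1, 25): 0.22,
    (26, 50): 0.15,
    (51, 75): 0.08,
    (76, 100): 0.42,
}


def midpoint_estimate(shares):
    """Weighted average of bucket midpoints (an illustrative assumption)."""
    return sum((low + high) / 2 * share for (low, high), share in shares.items())


estimate = midpoint_estimate(bucket_shares)
print(f"Estimated share of surveys designed for mobile: {estimate:.1f}%")
```

Under these assumptions the estimate comes out at roughly 50.6%, which is in line with the "about 50%" bottom line.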

In the next question, perhaps we uncovered the reason why mobile is still not the first thing survey designers focus on: the average length of surveys being fielded.

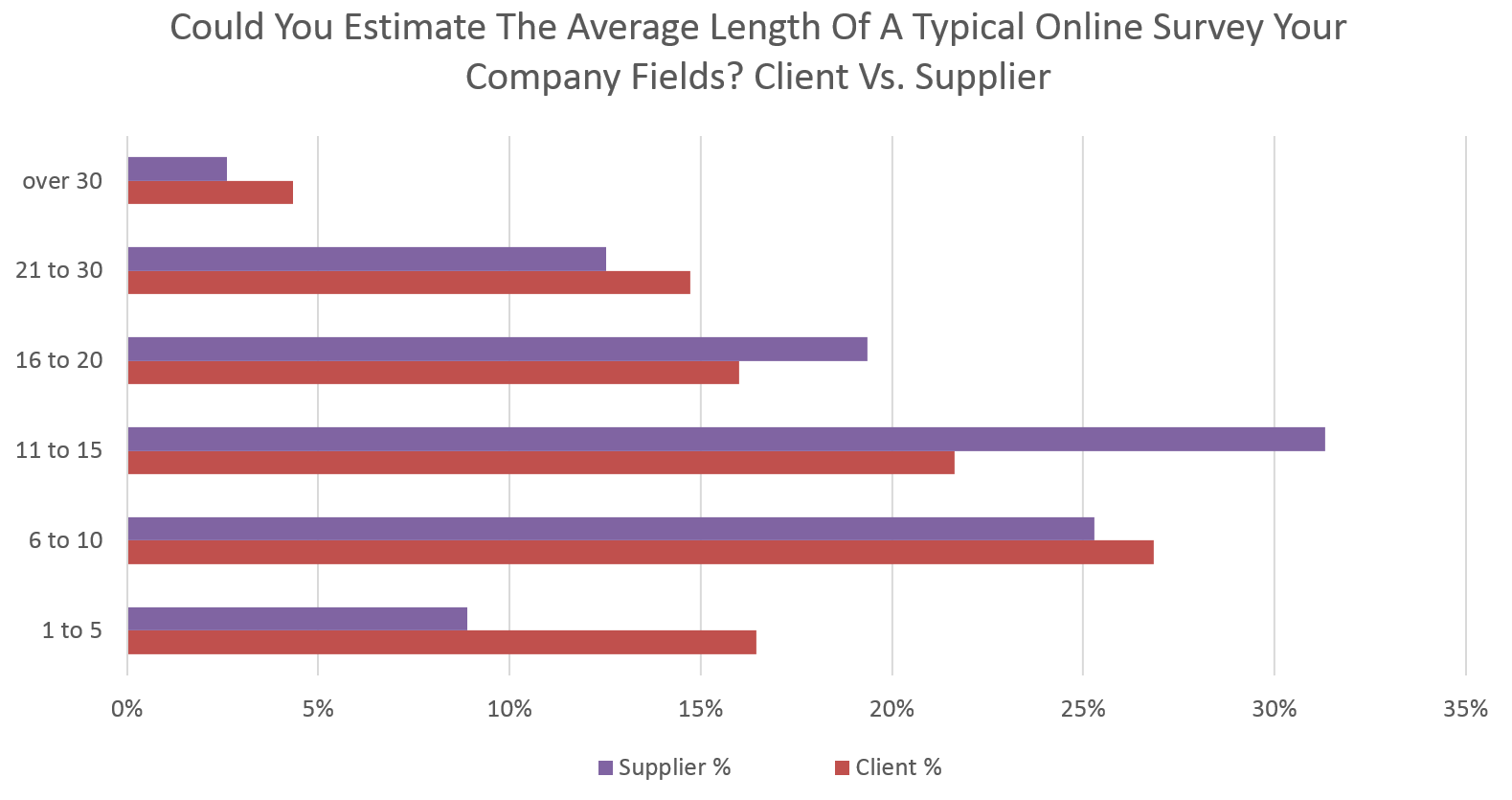

In general, GRIT respondents split roughly evenly into three groups, with about one in three falling into each: surveys of less than 10 minutes, of between 11 and 15 minutes, or over 16 minutes. To dive a bit deeper, here is a full breakdown of the specific brackets we identified:

Perhaps most surprising is the finding that clients, at a two-to-one ratio to suppliers, report fielding surveys of less than 5 minutes. That is a hopeful sign to be sure, although suppliers seem to be the culprits in conducting longer surveys of between 11 and 20 minutes.

Overall, when averaging all responses, the average survey length is 15 minutes. But as the range of responses clearly shows, over a third of all surveys still run longer than that, which almost by default means they are likely unsuited for a mobile participant.

Finally, we asked GRIT participants what they thought the maximum length of an online survey should be. Importantly, we did not ask specifically what the maximum length should be on a mobile device. Not making that clear is perhaps our own oversight, and it is possible the results would have been different if we had explicitly stated it. However, since this question was clustered with the previous question specifically related to mobile, we do expect that mobile was at least a consideration in their responses. Regardless, the results could perhaps best be summed up as “whatever we answered previously as the average length is the best length”, since the results do in fact closely mirror one another overall. The exception is that a higher percentage of research clients felt that under 10 minutes was ideal vs. what is currently being deployed.

Again, when averaging all responses, 15 minutes is the result, which also corresponds to the largest response category.

Considering the myriad studies by both panel and survey software providers (presented in whitepapers, at events, via webinars, etc.) that support the idea that the optimal length of a survey in a mobile-first world is less than 10 minutes, it’s encouraging to see that 52% of research clients report that as the ideal. Only 36% of suppliers report a similar goal, with fully another 32% of suppliers stating that between 11 and 15 minutes is the ideal.

Often we hear suppliers stating that their inability to migrate to a mobile-first or shorter survey design is due to client demand. Certainly GRIT data shows that this might be true to some extent, but a large contingent of clients seems to be embracing shorter, mobile-optimized surveys (which also likely explains the rapid growth of suppliers such as Google Consumer Surveys).

For this round we also tracked participation by mobile vs. PC, and the results are telling: almost 40% of GRIT respondents participated via a mobile device (phone, tablet or “phablet”), with the remainder via a desktop/laptop. GRIT was designed to be device agnostic, although the survey still ran around 15 minutes. Interestingly, the drop-off rate was higher among PC/laptop users, indicating that a “mobile first” design can mitigate drop-off and increase respondent engagement.

Based on our own sample it must be pointed out that it is increasingly important to account for a large percentage of mobile device users within any sample, whether it be B2B or consumer.

Perhaps the key implication here is that suppliers who aren’t leading with a mobile optimized and shorter solution to client requests are doing the client, themselves, and the industry a disservice.

Disclaimer

The views, opinions, data, and methodologies expressed above are those of the contributor(s) and do not necessarily reflect or represent the official policies, positions, or beliefs of Greenbook.
